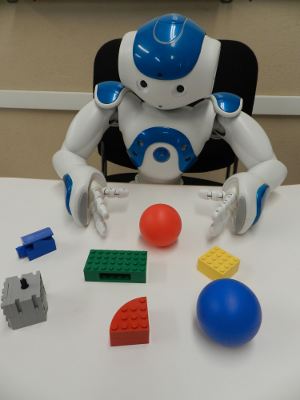

The goal of this research is to develop novel algorithms for coordinating the visual control modules of a humanoid robot within a decision-making framework. The idea is to represent the robot's visual system in a hierarchical, structured manner, where each level or module corresponds to a specific visual task, formulated within the decision-making framework so as to reduce the uncertainty associated with that module. By reducing uncertainty per module, we expect to increase the reliability and efficiency of the whole visual system. The modules can be analysed and handled independently, but they are ultimately coordinated so that the robot can perform more complex tasks (e.g., object manipulation). We are interested in the following visual modules:

(1) object recognition (which includes object detection and localisation), (2) 3D object reconstruction, and (3) gaze control, which decides where the robot should look. A further aim is to implement these modules on a real robot with limited processing power while optimising the robot's response time.